Happy Monday! ☀️

Welcome to the 142 new hungry minds who have joined us since last Monday!

If you aren't subscribed yet, join smart, curious, and hungry folks by subscribing here.

📚 Software Engineering Articles

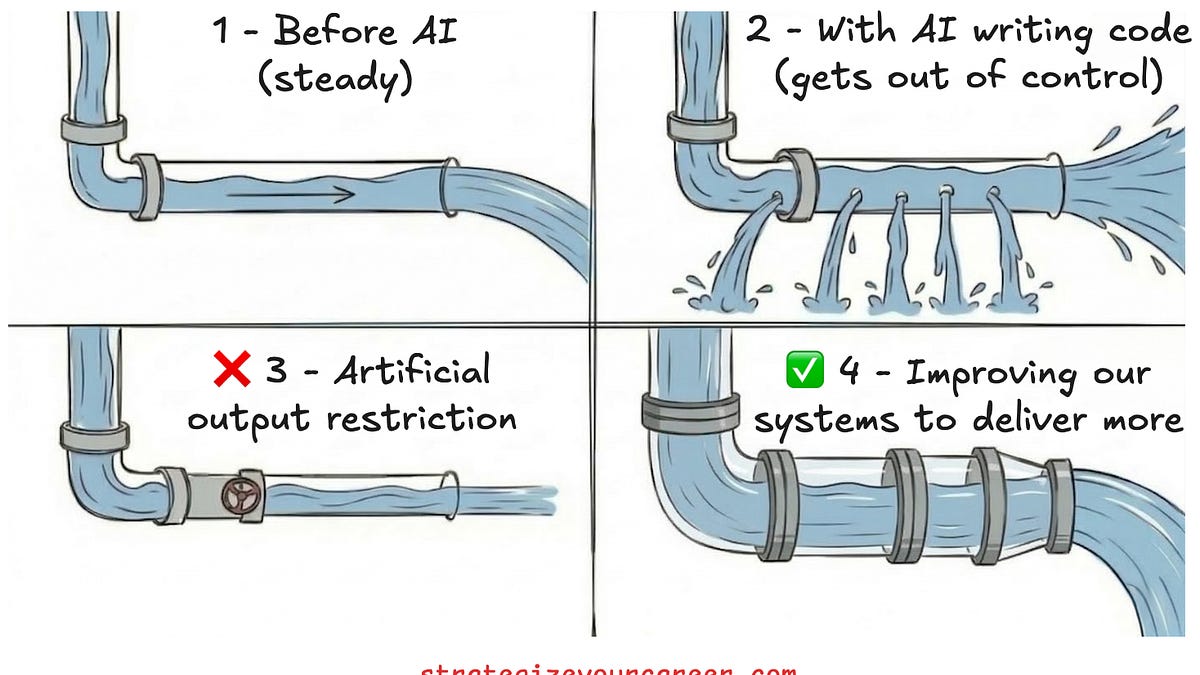

Scaling software engineering with AI unlocks new productivity

Screaming architecture & colocation makes projects self-documenting

Native E2E test reliability goes beyond fixing broken tests

LinkedIn scales LLM ranking with SGLang efficiently

Master 114 system design concepts like a pro

🗞️ Tech and AI Trends

Amazon addresses AI-related outages with internal deep dive

Meta delays new AI model over performance concerns

Google Maps redesigned with biggest AI upgrade

👨🏻‍💻 Coding Tip

Use idempotent Lambda handlers with deduplication keys to prevent duplicate processing from retries and async invocations

Time-to-digest: 5 minutes

Spotify Wrapped Archive identifies your five most remarkable listening days from the year and generates personalized, AI-written narratives about them. Imagine discovering that your wedding day was also your biggest music listening day—and getting a creative story about it. That's what Wrapped Archive delivers to hundreds of millions of users globally.

The challenge: Generate 1.4 billion unique, creative LLM reports in days without hallucinations, stereotypes, or timezone bugs—while keeping costs feasible and quality consistent at scale.

Implementation highlights:

Heuristic-driven day detection: Built a priority-ranked system of eight detection rules (biggest listening day, most nostalgic, biggest discovery day, etc.) to narrow millions of events into five standout days per user

Two-layer prompt engineering with evaluation loops: System prompts defined the creative contract; user prompts removed ambiguity by including listening logs, stats, and context. Three months of iterative testing and human review shaped every prompt revision

Model distillation for economics: Fine-tuned a smaller, faster model on "gold" reference outputs from frontier models, then layered Direct Preference Optimization (DPO) using A/B-tested human feedback to preserve quality while slashing costs

Lock-free concurrency through schema design: Stored each day's report as a separate column qualifier (YYYYMMDD), eliminating race conditions and coordination overhead when writing five concurrent reports per user

Proactive pre-scaling and synthetic load tests: Hours before global launch, pre-scaled infrastructure and ran regional load tests to warm caches and connection pools—because reactive scaling can't keep up when millions hit simultaneously
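To make the heuristic-driven day detection above a bit more concrete, here is a minimal Python sketch of a priority-ranked rule list. Everything in it is an assumption for illustration: the `DayStats` fields, the three rule names and scoring functions, and the tie-breaking are hypothetical, and Spotify's actual eight rules are not described in enough detail to reproduce.

```python
from typing import Callable, NamedTuple


class DayStats(NamedTuple):
    """Hypothetical per-day aggregate for one user."""
    day: str          # "YYYYMMDD"
    minutes: int      # total listening minutes
    new_artists: int  # artists heard for the first time that day
    old_tracks: int   # plays of older tracks (assumed nostalgia signal)


# Rules in priority order; each one scores a day, and the highest score wins.
RULES: list[tuple[str, Callable[[DayStats], int]]] = [
    ("biggest_listening_day", lambda d: d.minutes),
    ("biggest_discovery_day", lambda d: d.new_artists),
    ("most_nostalgic_day", lambda d: d.old_tracks),
]


def pick_standout_days(days: list[DayStats], limit: int = 5) -> list[tuple[str, str]]:
    """Return up to `limit` (day, rule_name) pairs, one day per rule."""
    chosen: list[tuple[str, str]] = []
    taken: set[str] = set()
    for name, score in RULES:
        if len(chosen) >= limit:
            break
        # A day already claimed by a higher-priority rule is skipped,
        # and a rule only fires when some day has a positive score.
        candidates = [d for d in days if d.day not in taken and score(d) > 0]
        if not candidates:
            continue
        best = max(candidates, key=score)
        taken.add(best.day)
        chosen.append((best.day, name))
    return chosen
```

Calling `pick_standout_days(days)` walks the rules from highest to lowest priority, so a day that qualifies under several rules is labeled by the most important one and the lower-priority rules fall through to other days.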

Results and learnings:

Massive scale, zero hiccups: Generated and served 1.4 billion reports flawlessly during a single global launch spike with no gradual rollout

Automated quality at billion-scale: Used LLM-as-a-judge evaluation on 165k sampled reports to catch failures like timezone bugs before they reached users, then traced problems back to source and bulk-remediated affected reports

Data modeling beats complex logic: The most elegant solution to concurrency wasn't clever code—it was thoughtful schema design that made unsafe operations impossible
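As a rough illustration of the schema idea in this recap, the Python sketch below models one row per user with one column qualifier per day (YYYYMMDD). The in-memory `table` dict is a hypothetical stand-in for a wide-column table, and the function names are made up for this example; it only shows the shape of the writes, not Spotify's storage layer.

```python
from concurrent.futures import ThreadPoolExecutor

# row key -> {column qualifier -> value}; hypothetical stand-in for a wide-column table
table: dict[str, dict[str, str]] = {}


def write_report(user_id: str, day: str, report: str) -> None:
    # Each writer sets only its own cell (row = user, qualifier = YYYYMMDD).
    # There is no read-modify-write of a shared list of reports,
    # so one writer cannot overwrite another writer's day.
    table[user_id][day] = report


def write_all(user_id: str, reports: dict[str, str]) -> None:
    """Write every report for a user concurrently, one cell per day."""
    table.setdefault(user_id, {})  # create the row once, before any worker starts
    with ThreadPoolExecutor(max_workers=5) as pool:
        futures = [
            pool.submit(write_report, user_id, day, text)
            for day, text in reports.items()
        ]
        for future in futures:
            future.result()  # re-raise any exception from a worker
```

The alternative, storing all five reports in a single value, would force each writer to read, append, and write back, which is where locks or retries would normally come in.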

Spotify's approach reveals a truth about AI at scale: the LLM call is the easy part. The real work lives in capacity planning, fault isolation, safety loops, and the unglamorous engineering that makes magic feel effortless to users.

ESSENTIAL (time finally makes sense)

Temporal: The 9-year journey to fix time in JavaScript

ARTICLE (wasm glow up)

Making WebAssembly a first-class language on the Web

ARTICLE (click click crawl)

Crawl entire websites with a single API call using Browser Rendering

ARTICLE (loop de loop confusion)

The Middle Loop

ESSENTIAL (databases hate change)

Refactoring Databases Is a Different Animal

ARTICLE (unix does ai now)

A Unix Manifesto for the Age of AI

ARTICLE (compiler drama)

Against query based compilers

ARTICLE (hire different energy)

Startups Should Evaluate Engineers Differently From Big Companies

ARTICLE (ai security go brr)

Patch Me If You Can: AI Codemods for Secure-by-Default Android Apps

Want to reach 200,000+ engineers?

Let’s work together! Whether it’s your product, service, or event, we’d love to help you connect with this awesome community.

Brief: Meta has delayed the release of its Avocado A.I. model to May after the foundational model underperformed rivals like Google and OpenAI on internal tests for reasoning, coding and writing, forcing leadership to consider temporarily licensing Google's Gemini instead.

Brief: AWS introduced a managed OpenClaw deployment on Lightsail to simplify AI agent setup. The viral project, however, faces critical security vulnerabilities, including remote code execution via CVE-2026-25253, more than 42,900 exposed instances across cloud platforms, a skill registry in which 20% of packages are malicious, and widespread credential theft risks affecting enterprise deployments.

Brief: Apple is postponing its smart home display (code-named J490) from spring 2025 to later this year as it races to complete a new AI-powered Siri assistant, which is critical to the device's core functionality.

Brief: Google Maps rolls out Ask Maps, a Gemini-powered chatbot for trip planning and location queries, plus Immersive Navigation with 3D views and smarter route previews that show overpasses and landmarks to help drivers better understand upcoming turns and destination details.

Brief: The gap between AI-native founders shipping at superhuman speeds and corporate executives who still view LLMs as toys is widening rapidly. As AI amplifies existing talent, the traditional bell curve of worker performance is splitting in half, and yesterday's reliable competence is becoming obsolete as the floor for acceptable output rises to 10x-engineer levels.

This week’s tip:

Implement idempotent Lambda handlers using request deduplication keys and DynamoDB conditional writes to prevent duplicate processing from retries and async invocations, with composite sort keys for request versioning. Lambda's at-least-once delivery semantics mean a handler can execute more than once for the same logical request, so deduplication has to happen at the application level rather than relying on infrastructure guarantees.
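Here is a minimal sketch of that pattern in Python. To keep it runnable without AWS credentials, it uses a hypothetical in-memory `InMemoryDedupTable` in place of DynamoDB; the event fields (`request_id`, `version`), key formats, and the `processed` list are all assumptions for illustration. Against a real table, the conditional write would typically be a boto3 `put_item` call with `ConditionExpression="attribute_not_exists(pk)"`, which fails with `ConditionalCheckFailedException` when the item already exists.

```python
from dataclasses import dataclass, field


class DuplicateRequest(Exception):
    """Raised when a dedup key has already been claimed."""


@dataclass
class InMemoryDedupTable:
    """Hypothetical stand-in for a DynamoDB table keyed by (pk, sk)."""
    items: dict = field(default_factory=dict)

    def put_if_absent(self, pk: str, sk: str, attrs: dict) -> None:
        # Mimics a conditional write: reject the put if the key already exists.
        key = (pk, sk)
        if key in self.items:
            raise DuplicateRequest(key)
        self.items[key] = dict(attrs)


table = InMemoryDedupTable()
processed = []  # stands in for the real side effect (order, payment, ...)


def handler(event: dict, context=None) -> dict:
    request_id = event["request_id"]   # assumed dedup key supplied by the caller
    version = event.get("version", 1)
    pk = f"req#{request_id}"
    sk = f"v#{version:06d}"            # composite sort key for request versioning
    try:
        # Claim the key first; only the first invocation gets past this line.
        table.put_if_absent(pk, sk, {"status": "IN_PROGRESS"})
    except DuplicateRequest:
        return {"status": "duplicate", "request_id": request_id}
    processed.append((request_id, version))  # do the work exactly once
    table.items[(pk, sk)]["status"] = "DONE"
    return {"status": "processed", "request_id": request_id}
```

Calling `handler({"request_id": "abc"})` twice processes the request once and reports the second call as a duplicate, while a call with `"version": 2` is treated as a new request. One design caveat: because the key is claimed before the work runs, a crash mid-processing leaves an `IN_PROGRESS` record, so a production version would likely add an expiry or a status check to let retries resume.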

When?

Async event processing with retries: Queue-to-Lambda or SNS-to-Lambda workflows where exponential backoff retries must not duplicate orders, payments, or user state mutations.

Saga pattern coordination: Orchestrate multi-step workflows across microservices where Lambda invokes Step Functions; dedup keys ensure step idempotency even if an invocation is retried.

Webhook ingestion: Accept third-party webhooks with explicit delivery retry semantics; dedup keys prevent duplicate user sign-ups or subscription updates from repeated webhook deliveries.

Success is liking yourself, liking what you do, and liking how you do it.

Maya Angelou

That’s it for today! ☀️

Enjoyed this issue? Send your friends here to sign up, or share it on Twitter!

If you want to submit a section to the newsletter or tell us what you think about today’s issue, reply to this email or DM me on Twitter! 🐦

Thanks for spending part of your Monday morning with Hungry Minds.

See you in a week — Alex.

Icons by Icons8.

*I may earn a commission if you get a subscription through the links marked with “aff.” (at no extra cost to you).